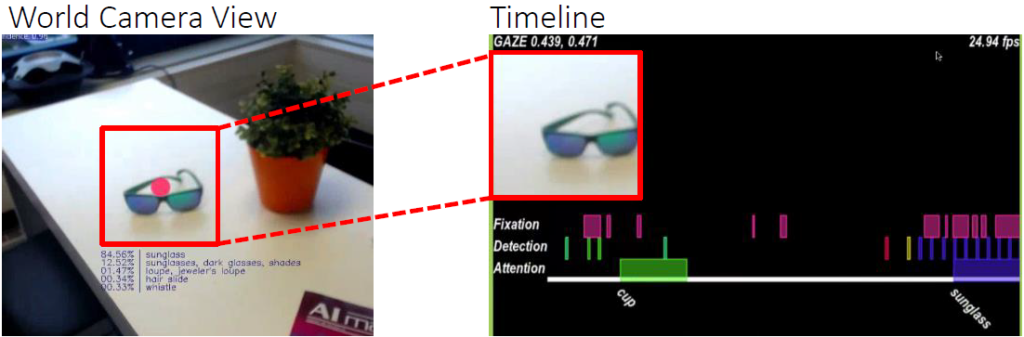

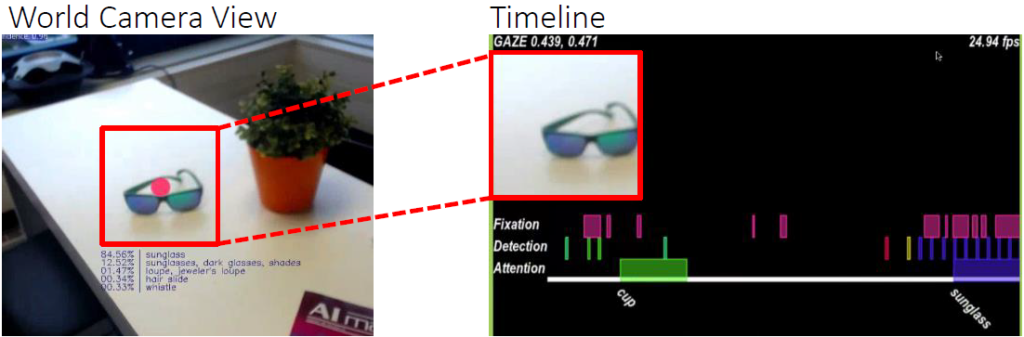

Recently, we published a prototype for gaze-guided object classification at the UbiComp 2016 conference. The topic also caught the interest of Pupil Labs, the manufacturer of the eye tracking device we used.

Gaze-guided Object Classification Demo

The WaterCoaster started as a seminar project (Gamified Life) comprising the design of a hardware prototype and a mobile app that measure the user's water intake. By applying gamification elements, we wanted to persuade users to drink more frequently and to drink a healthier amount of water during work time. We published the results as late-breaking work at CHI 2016.

Gaze estimation error is inherent in head-mounted eye trackers and seriously impacts the performance, usability, and user experience of gaze-based interfaces. Particularly in mobile settings, this error varies constantly as users move in front of a display and look at different parts of it. We envision a new class of gaze-based interfaces that are aware of the gaze estimation error and adapt to it in real time. As a first step towards this vision, we introduce an error model that is able to predict the gaze estimation error. Our method covers the major building blocks of mobile gaze estimation, specifically the mapping of pupil positions to scene camera coordinates, marker-based display detection, and the mapping of gaze from scene camera to on-screen coordinates. We develop our model through a series of principled measurements of a state-of-the-art head-mounted eye tracker.

Multi-touch surfaces enable highly interactive and intuitive applications. However, large devices also impose constraints: users may be unable to reach every part of the display without walking around or leaning on the surface. To compensate for this restriction, I present a method that uses mobile eye tracking as an additional input modality. In particular, I propose an approach based on marker-based display recognition and homogeneous transformations. In a user study, I evaluated the accuracy of the implementation. From the results, I derived design guidelines for building interfaces and considered how to address the limitations of the proposed system.
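
To give a rough idea of what mapping gaze onto a display with a homogeneous transformation can look like, here is a minimal sketch in plain Python. It is not the implementation from the thesis. It assumes that marker detection has already produced the four display corners in scene camera coordinates, and it estimates a planar homography from those four correspondences. The function names (`solve_linear`, `estimate_homography`, `map_point`) and all coordinate values are hypothetical and chosen only for illustration.

```python
def solve_linear(a, b):
    """Solve a * x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def estimate_homography(src, dst):
    """Return a 3x3 homography H with dst ~ H * src from four point pairs.

    The bottom-right entry of H is fixed to 1, which leaves eight unknowns
    and two linear equations per point pair.
    """
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def map_point(H, point):
    """Apply H to a 2D point using homogeneous coordinates."""
    x, y = point
    u, v, w = (row[0] * x + row[1] * y + row[2] for row in H)
    return (u / w, v / w)


# Hypothetical display corners as seen by the scene camera (pixels),
# listed as top-left, top-right, bottom-right, bottom-left.
corners_in_scene = [(100, 80), (520, 110), (500, 400), (90, 360)]
# Matching corners of an assumed 1920x1080 display.
corners_on_screen = [(0, 0), (1920, 0), (1920, 1080), (0, 1080)]

H = estimate_homography(corners_in_scene, corners_on_screen)
gaze_on_screen = map_point(H, (300, 240))  # hypothetical gaze sample
```

With only four correspondences the homography is determined exactly, so any error in the detected corners propagates directly into the mapped gaze point. A system that tracks more than four marker points would more likely use a least-squares or robust estimate instead.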