Computational Modelling and Prediction of Gaze Estimation Error for Head-mounted Eye Trackers

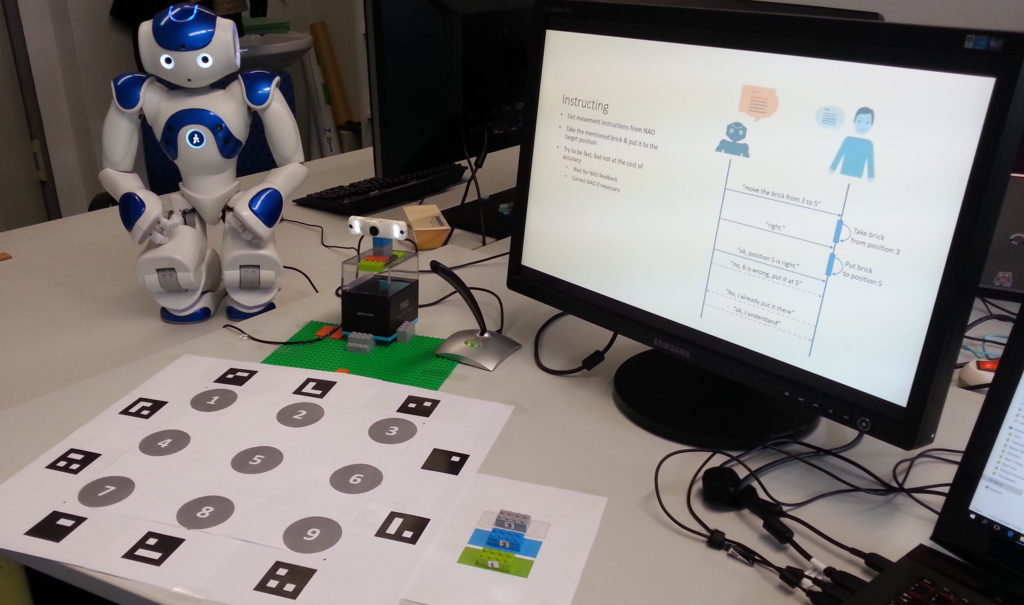

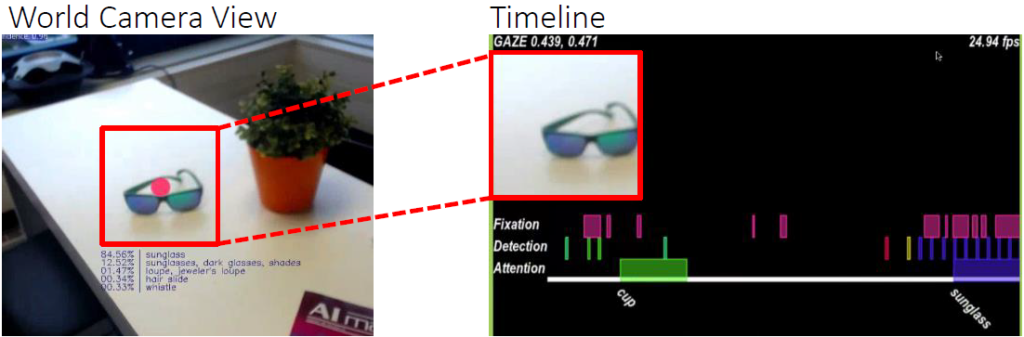

Gaze estimation error is inherent in head-mounted eye trackers and seriously impacts the performance, usability, and user experience of gaze-based interfaces. Particularly in mobile settings, this error varies constantly as users move in front of a display and look at different parts of it. We envision a new class of gaze-based interfaces that are aware of the gaze estimation error and adapt to it in real time. As a first step towards this vision, we introduce an error model that is able to predict the gaze estimation error. Our method covers the major building blocks of mobile gaze estimation, specifically the mapping of pupil positions to scene camera coordinates, marker-based display detection, and the mapping of gaze from scene camera to on-screen coordinates. We develop our model through a series of principled measurements of a state-of-the-art head-mounted eye tracker.
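
To make the last building block concrete, the following is a minimal sketch of how a gaze point in scene camera coordinates could be mapped to on-screen coordinates once the display corners have been detected. It assumes a planar homography estimated from four corner correspondences, which is one common choice for planar displays; the abstract does not state which mapping the authors use. The function names, the corner coordinates, and the 1920 x 1080 screen size are all hypothetical values chosen for illustration.

```python
import numpy as np


def estimate_homography(src, dst):
    """Estimate H such that dst ~ H @ src (direct linear transform).

    src, dst: sequences of at least four (x, y) point pairs.
    Assumption: the display is planar, so a homography is adequate.
    """
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The homography is the right singular vector belonging to the
    # smallest singular value of the stacked constraint matrix.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]


def scene_to_screen(h, gaze_xy):
    """Map one gaze point from scene camera pixels to screen pixels."""
    p = h @ np.array([gaze_xy[0], gaze_xy[1], 1.0])
    return p[:2] / p[2]


# Hypothetical display corners as seen by the scene camera (pixels),
# e.g. obtained from marker-based display detection.
scene_corners = [(212, 148), (1065, 171), (1040, 655), (190, 610)]
# Corresponding corners of a hypothetical 1920 x 1080 screen.
screen_corners = [(0, 0), (1920, 0), (1920, 1080), (0, 1080)]

H = estimate_homography(scene_corners, screen_corners)
gaze_screen = scene_to_screen(H, (640, 400))  # hypothetical gaze sample
print(gaze_screen)
```

In a pipeline of this shape, errors in the pupil-to-scene mapping and in the detected corner positions both propagate through `H` into the on-screen gaze estimate, which is why each stage matters when modelling the overall error.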